Mind Chimes

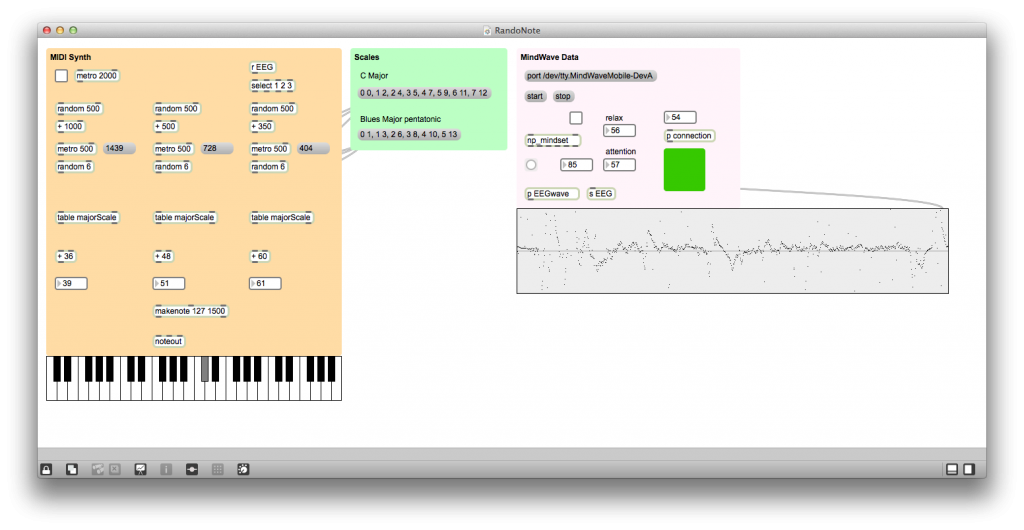

Today I debuted my new interactive dome piece, Mind Chimes, at ARTS Lab, UNM. The piece generates visuals and music from a live brainwave feed captured by a NeuroSky MindWave Mobile headset. I coded the entire piece in MaxMSP and used vDome, an open-source…
