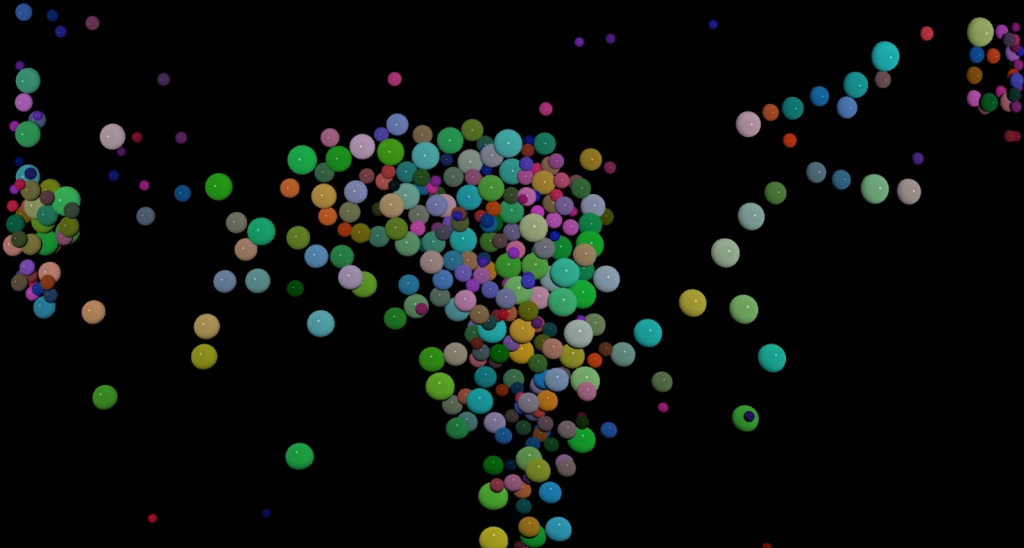

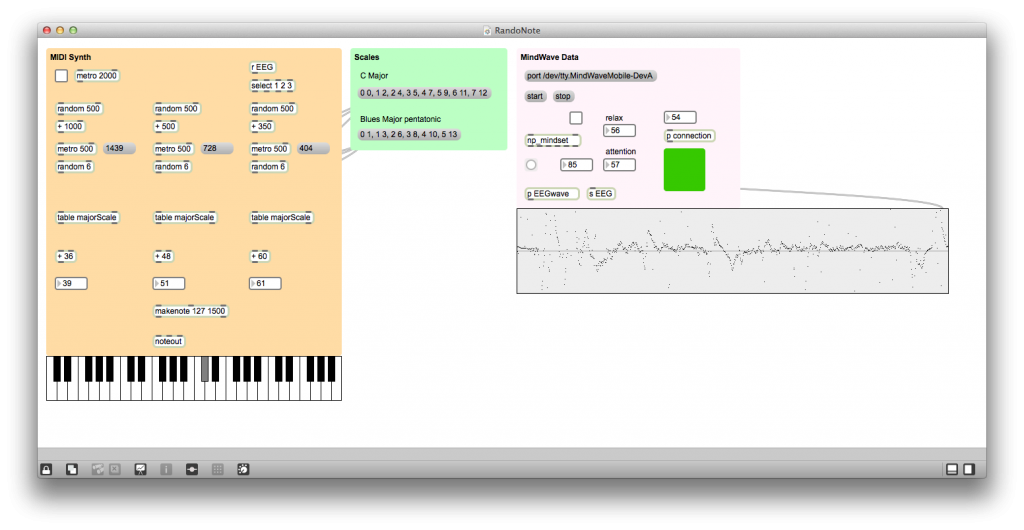

Sound-reactive visuals with MaxMSP

I created this Max patch as a test for sound-reactive visuals. I use Jitter physics to give the balls mass in the virtual world, then map ghost objects at the bottom of the world to apply impulses based on the sound coming in or…
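To make the idea concrete outside of Max, here is a rough plain-Python sketch of the same mapping: measure the level of an incoming audio block, turn it into an upward impulse, and apply that impulse only to balls sitting in a zone near the floor. This is not the patch itself, where Jitter's physics objects do the work. The names `rms`, `impulse_from_level`, and `step`, along with the gain, threshold, and trigger height, are all assumptions made up for illustration.

```python
import math

GRAVITY = -9.8  # m/s^2, pulls balls toward the floor (assumed value)


def rms(samples):
    """Root-mean-square level of one block of audio samples (floats in -1..1)."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def impulse_from_level(level, gain=5.0, threshold=0.05):
    """Map a sound level to an upward impulse; quiet input gives no kick."""
    if level < threshold:
        return 0.0
    return gain * level


def step(ball, impulse, dt=1.0 / 60.0, trigger_height=0.5):
    """Advance one ball by one frame.

    ball is a dict with 'mass', 'y' (height) and 'vy' (vertical velocity).
    The impulse is applied only while the ball is inside the trigger zone
    near the floor, a stand-in for a ghost object at the bottom of the world.
    """
    if ball["y"] <= trigger_height:
        ball["vy"] += impulse / ball["mass"]  # impulse = change in momentum
    ball["vy"] += GRAVITY * dt
    ball["y"] += ball["vy"] * dt
    if ball["y"] < 0.0:  # simple floor: the ball stops and rests
        ball["y"] = 0.0
        ball["vy"] = 0.0
    return ball


# Usage: one loud 440 Hz block kicks a light ball and a heavy ball.
loud = [0.8 * math.sin(2 * math.pi * 440 * n / 44100) for n in range(512)]
kick = impulse_from_level(rms(loud))
light = step({"mass": 1.0, "y": 0.0, "vy": 0.0}, kick)
heavy = step({"mass": 4.0, "y": 0.0, "vy": 0.0}, kick)
```

Because the impulse is divided by mass, the same sound sends the light ball up faster than the heavy one, which is the effect that giving the balls mass makes visible.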
